Can machines change human behavior? We asked ourselves this daunting question at the beginning of 2020, which led to an exciting collaboration with Jigsaw, a unit within Google that forecasts and confronts emerging threats, and their team behind Perspective API. This culminated in a successful experiment with 50k users, on nearly half a million comments across top-tier publishers such as AOL, Salon, Newsweek, Sky Sports, and more. In a word, ‘yes’ – it is possible.

We achieved this through ‘Real-Time Feedback,’ a feature built on the premise of nudge theory, a known theory in behavioral sciences that proposes positive reinforcement and indirect suggestions as ways to influence the behavior and decision making of groups or individuals.

Each time we found a comment in violation of site guidelines, we nudged the user to reconsider their message so they could still participate in the conversation, reducing the load on human moderators and improving the quality of conversations. We believed this would encourage users to participate in online conversations in a healthier and more engaging way.

Since then, more users have responded to a nudge

As WIRED stated in their OpenWeb feature, ‘It’s a rosy view of the internet’ – and we are excited to share that this view is evolving and getting better. We spent the last few months scaling this feature across our network of publishers, each with different audiences and needs.

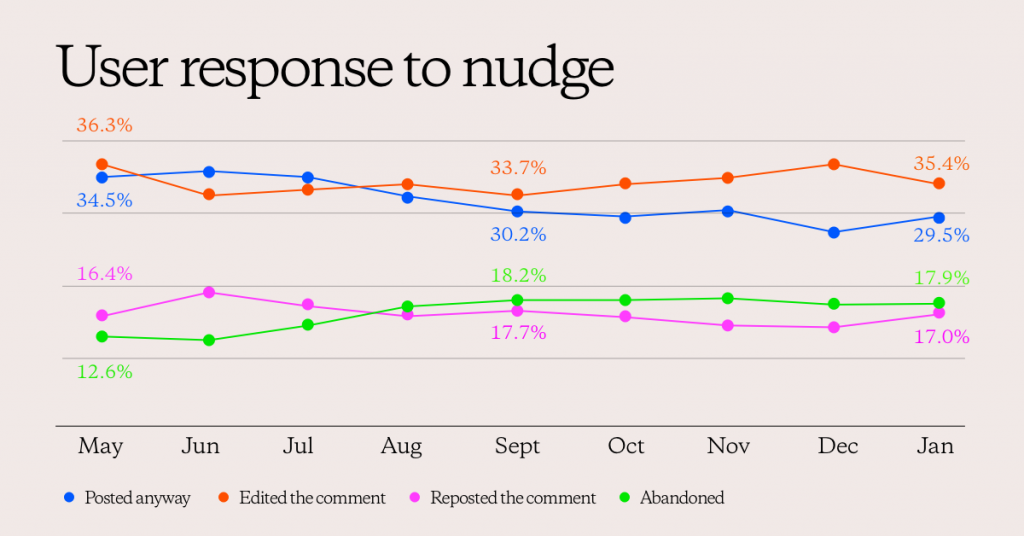

Firstly, we reviewed the performance of publishers from the first study over time, from May 2020 through January 2021. Good news! As the chart shows, we continue to see a healthy positive trend in the percentage of users who edit their comments and in the share of comments successfully published.

It’s evident that users are learning over time and paying attention to the nudges presented to them. As the share of users who ignore the nudges or repost decreased, we saw a corresponding lift in users editing their comments and in trolls/spammers leaving the conversation.

Secondly, we have now scaled this feature across 41 publishers, and over 1.9 million comments received a real-time nudge in January 2021. The results are encouraging: they reinforce the effectiveness of Real-Time Feedback and the consistency of toxicity models across different environments, including the US 2020 Election cycle.

Latest results of nudges

(compared to the initial case study results)

- 33% of users edited their comment upon nudge (-2.9%)

- 30% of users posted the comment anyway (-20%)

- 21% of users left without trying to edit or comment further (+75%)

- 16% of users tested the feature by reposting (-11.1%)

Of the 33% of users who chose to edit their comment, 58% changed it to be immediately permissible, a lift of over 7% from the initial test.
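To put these two figures together, here is a minimal sketch of the arithmetic, assuming the 58% applies only to the 33% of nudged users who edited (as stated above). The variable and function names are ours, chosen for illustration.

```python
# Outcome shares after a nudge, as reported in the latest results above.
outcomes = {
    "edited": 0.33,
    "posted_anyway": 0.30,
    "left": 0.21,
    "reposted": 0.16,
}

# Share of editing users whose edit made the comment immediately permissible.
permissible_given_edit = 0.58


def permissible_share(outcomes, permissible_given_edit):
    """Share of all nudged users who edited into a permissible comment."""
    return outcomes["edited"] * permissible_given_edit


total = sum(outcomes.values())
share = permissible_share(outcomes, permissible_given_edit)

print(f"outcome shares sum to {total:.0%}")
print(f"edited into a permissible comment: {share:.1%} of nudged users")
```

Under that assumption, roughly one in five nudged users ended up with an immediately permissible comment after editing.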

The share of users editing or reposting upon being nudged saw a slight dip in this cohort of publishers, but it is nonetheless encouraging to see the percentages holding close to the initial results as we scale.

On the other hand, nudges put a brake on the instantaneous outburst. A large share of users abandoned the effort, indicating that friction deters trolls/spammers and users who are just having a bad day. It also suggests that these users learned this avenue of expression is not worthwhile for them, and that they are unwilling to adapt to the publisher guidelines.

Gentle nudges can change the course of a conversation

Since our publication, other industry members have started using a similar feature albeit with different language and implications to commenters (tech giants Twitter, Instagram and YouTube have built their own versions). The consensus is that gentle nudges have the potential to change the course of a conversation. By providing transparency and education throughout the user engagement journey, digital publishers can positively impact overall community health.

As our partner Salon aptly described in this WSJ story, “A civil comments section encourages reader engagement, increasing opportunities to show readers ads or convert them into subscribers. And a nudge mechanism—rather than one that automatically blocks inappropriate language—encourages debate to remain on the site rather than move somewhere like Twitter.”

As we continue to roll out this feature across 1000+ publishers globally, we believe assessing user intent is needed to more accurately interpret these results. This can be done through further analysis of the edit behavior and user surveys. Stay tuned for more updates in 2021.